A 🐉 Dragons Map of AI Advances for Gaming

In which we map the parts of the intersection of recent AI advances and gaming that I find intriguing, listing those that look worthy of attention and exploration.

In ancient times, mapmakers would mark unknown territories with hic sunt dracones or 'here be dragons'. These dragons weren't just symbols of danger; they also hinted at hidden treasures.

This idea captures well how I tend to approach the unknowns around research and exploration – filled with potential yet unknown risks. Exploring new frontiers, whether in technology or any field, often feels like navigating these uncharted territories.

While we might not always need such exploration to find valuable insights – sometimes, we might just stumble upon them – this process of mapping our desires to venture into the unknown feels crucial: it's not just about sharing my thoughts with others; it's also about clarifying my own thoughts by writing them down.

Still, this is not a full map of the ‘AI for Gaming’ space: such a thing feels too ambitious and too volatile at this time. There is simply too much going on.

This is merely a list of the dragons: what I find intriguing and worthy of attention and further exploration at this time.

Some of them are fuzzier than others, and some are more actionable than others. The list is meant to evoke thoughts and questions rather than to provide answers or guide exploration directly.

🐉 Dragon #1 - Oracles vs. Muses

When we interact with computers, we often act as if we were asking a knowledgeable person for answers. We are in oracle mode, much like how we use search engines: we ask a question and get a direct answer or links to where we might find it.

In the past, we've often approached AI tools, like language models and chatbots, expecting them to work the same way. But sometimes, this leads to disappointment. These tools are more like storytellers—they're great at sounding confident, but they can’t really tell the difference between fact and fiction.

However, things start to shift when we change our approach. If we begin using these AI tools not just as answer-givers but as 'creative companions,' we unlock their true potential. Let's call this the 'muse' mode. In this mode, these AI partners aren't there to dictate what we should write or which code to execute. Instead, think of them as knowledgeable assistants who've read every book, every documentation and API, and can even mimic the styles of great poets and artists.

Many concerns, like the AI making up things (what we call 'hallucinations'), start to lessen when we stop seeing these tools as oracles and start seeing them as muses. This shift is already happening in the tech world. For instance, Microsoft and GitHub use the term 'copilot' for their AI tools, hinting at this partnership approach. Google, whose value comes from monetizing our firmer intent in ‘oracle’ mode, might find this shift challenging, as it might change how advertisers perceive the value of users’ attention in a ‘muse’ posture.

My personal experience here suggests that the use of copilots for coding feels like a form of superpowered “rubber ducking” which forces me to think more clearly because I need to communicate my intention to the LLM in order for it to provide me with guidance and suggestions.

It is rare and almost unnecessary that the coding copilot gives me the right code that will function as I want it without any change. I don’t expect it and I don’t find it necessary: what’s important is that the LLM is there to guide me and suggest things and have a Socratic dialog with me at any moment of the day, without having to sacrifice or interrupt colleagues in doing so.

The productivity gain does not come from the generation of the code (I ultimately do that myself) but from the dramatic augmentation of the creative flow state (for myself and for my colleagues, whom I don’t have to interrupt).

I have written more about this in The chasm between probabilistic and deterministic tooling as well as The Intriguing Cognitive Puzzle of Fabulist AIs.

🐉 Dragon #2 - The Utility of Forced Averages

Machine Learning can be seen as a smart way to summarize lots of information. It looks at a vast amount of data and finds a more compact way to represent it, keeping the most important parts for a specific task. Generative AI, a branch of machine learning, takes this a step further. It uses these summaries to create new, original content under user guidance, even if that content wasn't in the original data it learned from.

To work well and avoid legal issues, like copyright violations or privacy intrusions, these AI models are designed to avoid memorizing the data they are trained with. Instead, they focus on capturing general patterns or 'averages' of the data they've seen. It's a bit like remembering the gist of a story, not the exact words.

But here's the catch: this approach of 'forced averaging' might affect how creative and original these AI tools can be. Since the arrival of tools like ChatGPT, the idea of 'generative' AI is shifting. It's moving from being seen as wildly creative to creating things that are unoriginal. The question is, is this lack of originality because of the 'averaging' process, or simply because these tools and their users are still new?

This 'averaging' requirement might actually be a good thing, and not just for generalization. It encourages us to see these tools as augmenting human abilities, not replacing them. This is particularly reassuring in creative fields, where people often worry about AI taking over jobs. Instead of seeing GenAI as a competitor, we can view it as a powerful assistant, taking care of the routine tasks and freeing us to focus on truly creative endeavors.

My personal experience here revolves around the use of AI tools for the creation of text, code and images.

As I am not a visual artist, all the diffusion models I tried (DALL-E, Midjourney and Stable Diffusion) are able to do a far better job than I could in almost all situations but I have found it frustrating and difficult to guide them to the mental image that I have in my head. Normally it’s “good enough” and “better than nothing” but not something that would replace the need for an artist if I needed something more specific and curated.

For the creation of text, I found ChatGPT4 very useful to polish and correct the tone and style of prose. I have also used it in this article. This feels especially valuable for non-native English speakers like myself, as it is often difficult to appreciate the nuance of style in a language learned later in life and outside of a higher education system (as in my case). Averaging for style polish is useful because we want adherence to form to be centered around expected uses, and lack of originality here is a feature.

As for code, the fact that the code is “unoriginal” is also a benefit. I want it to give me the most common way to solve a problem so that it maximizes its readability for those that will have to maintain it after me.

See also ChatGPT Is a Blurry JPEG of the Web in the New Yorker, as well as To Own the Future, Read Shakespeare in Wired.

🐉 Dragon #3 - Architecture vs. Scale

In the world of advanced AI, there's an ongoing debate about the best path to what's called 'artificial general intelligence' or AGI, which is AI that can understand, learn, and apply knowledge as well as a human brain. There are two main views in this debate.

One group, including DeepMind and Google, thinks we need to develop completely new types of AI architectures to truly mimic the human brain. The other group, led by organizations like OpenAI and its related companies, believes that making our current AI systems larger is enough to eventually give us AGI.

Those who focus on scale argue that when an AI system reaches a certain size, about 3.5 trillion parameters, it'll naturally become as capable as the human brain. For context, it's rumored that GPT-4, a well-known AI model, has around 1 trillion parameters. But increasing the size of these models faces technical challenges. We're reaching the limits of current technology, and most of the advanced silicon fabrication capacity (needed for AI compute acceleration) is concentrated in places like Taiwan and South Korea, which are exposed to geopolitical risks such as a Chinese invasion of Taiwan.

On the other hand, there are reasons to question the scale-focused approach. For instance, open-weights LLMs (like Llama, Falcon, Mistral and Phi) show that you can start with a large, general-purpose model and fine-tune it for specific tasks without needing massive computational power. This approach is showing promising results and is less demanding in terms of resources. The HuggingFace Open LLM leaderboard shows impressive improvements over time for these models, although one has to be mindful of test contamination, given how many incentives there are to publish top scores to receive notoriety, attention and funding.

Also, a recent paper (which won best paper at NeurIPS 2023) challenges the idea that LLMs exhibit traits that emerge spontaneously out of training simply by scale, calling it instead a “mirage” due to the non-linearity of the metrics used to evaluate performance.
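To make the “mirage” argument more concrete, here is a minimal sketch of how a non-linear metric can turn smooth progress into an apparent jump. The per-token accuracy values and the answer length are made up for illustration, and the assumption that tokens are independent is a simplification of mine, not something taken from the paper.

```python
# Illustrative only: the accuracy values below are invented, not measured.

def exact_match_rate(per_token_accuracy: float, answer_length: int) -> float:
    """Chance that every token of an answer is correct, assuming each
    token is right independently (a simplifying assumption)."""
    return per_token_accuracy ** answer_length


# Hypothetical, smoothly improving per-token accuracy as models get larger.
per_token_accuracies = [0.5, 0.6, 0.7, 0.8, 0.9, 0.95]
ANSWER_LENGTH = 10  # hypothetical number of tokens in the expected answer

for p in per_token_accuracies:
    rate = exact_match_rate(p, ANSWER_LENGTH)
    print(f"per-token accuracy {p:.2f} -> exact match rate {rate:.3f}")
```

The per-token numbers rise steadily, while the all-or-nothing exact match score stays near zero for most of the range and then climbs quickly, which can look like a capability that appeared out of nowhere.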

While companies like Google and DeepMind have been somewhat taken aback by the success of AI tools like ChatGPT, it'll be interesting to see how their upcoming models, like Gemini Ultra, compare. So far, GPT-4 is the leader in terms of usefulness and value, but the field is rapidly evolving.

In this space I’m fascinated by activities like the BabyLM challenge, which explicitly push against the “scale is all you need” stance and try to imitate the research fervor generated by the ImageNet competition that kicked off the deep learning renaissance.

I’m intrigued by the idea that better training data might account for improvements in LLM performance as much as (if not more than) improvements in architecture. Is it possible to build models that learn to learn with less data, bootstrapping themselves in a compounding “learn to learn” way, as humans appear to do?

Training smaller models purely on language, with smaller amounts of highly curated training data (like TinyStories), much as we do with children, might provide the opportunity for faster try/fail cycles of architectural innovation without requiring the massive capital investment (compute and energy) that the current LLMs require.

See also Tiny Language Models Come of Age in Quanta Magazine.

🐉 Dragon #4 - Training vs. Fine Tuning

The remarkable thing about ChatGPT is how it lets anyone 'program' it by simply explaining what they want in plain English. This breakthrough isn't just a triumph in machine learning; it's a revolution in consumer technology. It has opened up the ability to 'train' an AI model to virtually everyone who can read and write, not just tech experts.

This user-friendliness comes from what we call 'zero-shot' (no examples needed) and 'few-shot' (only a few examples needed) learning. These methods allow ChatGPT users to tailor the AI for specific tasks without needing any background in machine learning.
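As a rough illustration of the difference, the sketch below builds a zero-shot and a few-shot prompt as plain text. The `build_prompt` helper, the `Input:`/`Output:` layout and the example sentences are all hypothetical choices of mine; no particular model or API is assumed, and the resulting string would simply be sent to whichever LLM is in use.

```python
from typing import List, Tuple


def build_prompt(task: str, examples: List[Tuple[str, str]], query: str) -> str:
    """Assemble a plain-text prompt: a task description, zero or more
    worked examples, and the new input left open for the model to complete."""
    lines = [task, ""]
    for example_input, example_output in examples:
        lines.append(f"Input: {example_input}")
        lines.append(f"Output: {example_output}")
        lines.append("")
    lines.append(f"Input: {query}")
    lines.append("Output:")
    return "\n".join(lines)


TASK = "Classify the sentiment of the player review as positive or negative."
QUERY = "The boss fight was thrilling."

# Zero-shot: only the instruction and the new input.
zero_shot = build_prompt(TASK, [], QUERY)

# Few-shot: the same instruction plus a couple of worked examples.
few_shot = build_prompt(
    TASK,
    [
        ("I loved this level.", "positive"),
        ("The controls feel broken.", "negative"),
    ],
    QUERY,
)

print(few_shot)
```

In both cases the 'programming' is done entirely in text: nothing about the model itself is changed.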

Since GPT-3 showed the emergence of these capabilities in 2020, research and activity in this area have exploded. The cutting-edge work now combines several approaches: learning from large amounts of data to understand underlying patterns, refining how the AI understands and follows user instructions, and incorporating feedback to improve safety and the quality of results.

One of the biggest shifts in AI development has been the division of the model creation process. The initial training phase, which is complex and expensive, creates a foundational model. Then, there's a much simpler and cheaper 'fine-tuning' phase, where the model is adjusted for specific tasks. This shift has moved the focus of innovation from creating the base models to fine-tuning them. However, this area is still new and comes with a lot of excitement and hype, which can be a risky mix.

My personal experience in this area revolves around trying to fine tune LLMs for coding copiloting for Rust (which we use in my current job to program backend services) and Unreal Engine (which we currently use for programming the game logic).

Unreal Engine is programmed in C++ but its source code is not open source, and there is very little out there that can be ingested and used for fine-tuning by existing players in this space. The Unreal API and architecture are somewhat special, and existing coding copilots struggle to suggest code that matches the aesthetic expectations of game developers in this space.

I’ve tried some RAG approaches but with little success. LoRA feels like the most promising method to try in this space but I haven’t had enough cycles (and access to computational resources) to go down this path yet.

I’ve written more about the mysteries of the emergence of “in context learning” in The In-Context-Learning Puzzle.

🐉 Dragon #5 - System 1 & System 2

We can divide human cognitive abilities into two classes: one is quick, instinctive, and often unconscious; the other is slow, deliberate, and logical. In psychology, these are known as System 1 and System 2 thinking, respectively.

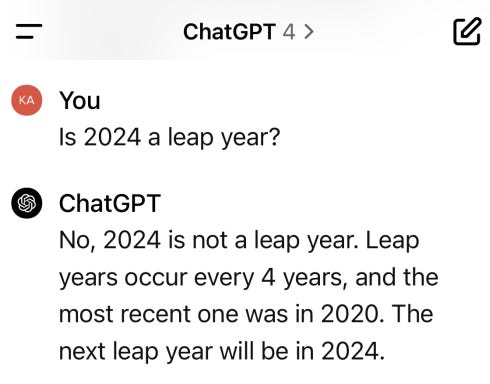

We can look at fine-tuned LLMs through this lens. These AI models have brought something new to the table: they're more than just stochastic parrots, but their grasp of the world still feels quite shallow. Their ability to think logically on their own, without any help, is limited, as we sometimes see in their amusing mistakes.

Mostly, LLMs like ChatGPT seem to operate in System 1 mode – quick and intuitive. But what's missing is their natural ability to switch to System 2 – the slow, thoughtful, logical way of thinking. We've seen glimpses that LLMs can do this type of thinking, but only when we guide them with specific instructions or examples. It's like having to explain in English how to think for each task, which feels like a workaround rather than a real solution.

This challenge is at the forefront of current LLM research. For example, a recent study introduced a new approach called 'Tree of Thoughts,' suggesting new ways to improve LLMs' reasoning abilities. Both OpenAI and DeepMind are rumored to be exploring this frontier (see Q*), but there's a lot of uncertainty and excitement in this area.

Before ChatGPT, the most exciting advances in AI problem-solving came from reinforcement learning – the technology behind AlphaGo and AlphaFold's remarkable achievements in games and science. These successes showed how AI could solve problems once thought beyond the reach of computers.

But this raises a question: time delays in human responses suggest that System 1 thinking has relatively few “forward passes” in our neural networks. System 2 thinking is slower, more elaborate and more effortful. Is it just many more forward passes, or is there something materially different in how System 2 thinking operates in the brain? Is obtaining System 2 thinking a necessary condition for the utility of GenAI in gaming, or is it just a nice-to-have?

I’ve written more about this in AI-centric Computational Architectures.

🐉 Dragon #6 - Directed vs. Procedurally Generated

Creating a video game that feels alive and captivating is a complex task, especially when developers try to use procedural content generation – a method where game elements are created automatically by algorithms rather than being handcrafted by artists and designers. This approach, though innovative, is tough to execute well. It requires intricate coding, fine-tuning, and quality assessment, often more so than traditional game development methods.

Consider a game like 'No Man's Sky,' which took years to refine its procedurally generated content to the point where it felt truly engaging and added depth to the game. It's a rare success in a field where such techniques can often seem more like shortcuts due to budget or time constraints rather than genuine enhancements to gameplay.

Now, imagine if we could apply generative AI not just in the game development process but also in real-time during gameplay, without constant oversight from artists or designers. This opens up fascinating possibilities but also raises several questions:

How do we apply artistic vision and game design principles to content that's automatically generated?

What does it mean to 'fine-tune' a generative model to, say, create a level map or an alien animal? What kind of data would we need for this, and how much?

Who is responsible for gathering this data, and how can we assess the value of this effort before we even start?

How does this shift in technology affect the traditional workflow of game development, especially regarding the allocation of resources?

These questions highlight the balance between maintaining the 'soul' of a game – its artistic and design integrity – and embracing the efficiencies and innovations offered by procedurally generated content through AI.

🐉 Dragon #7 - AI Players for Testing

Testing video games is a unique challenge. Unlike standard software, games offer a vast range of possibilities for player actions, making testing both complex and costly. Moreover, the repetitive nature of game testing – like checking the main gameplay routes, known as 'golden paths' – makes it a dull task for human testers, who might neither perform well at such tedious work nor enjoy doing it.

Unlike other types of software, games are hard to break down into smaller, testable parts. They often have many interconnected components, optimized for fast performance and quick updates rather than easy testing. This can lead to situations where a game works perfectly in one setting, or when each module is tested independently, but fails once it's fully integrated or played under different conditions.

Traditionally, human players are hired to test games, systematically following scripts to ensure the core mechanics work smoothly. This is vital, especially for online games where player data is spread across different systems with varying connection speeds.

Could AI, particularly reinforcement learning (RL), which is known for teaching AI to play games like Atari, StarCraft, and Dota 2, help with game testing? Could RL agents be trained to play games realistically enough to assist human testers, freeing them from the monotony of repeating actions during development and instead allowing them to focus on more creative and rewarding testing strategies?

The challenge is to make these AI agents both autonomous and accurate enough to adapt to changes in the game without causing unnecessary alerts.

Research in the RL space has slowed in the last few years as research excitement around ML has moved from RL to transformers and LLMs. In 2013, DeepMind’s agents learned to play most Atari games except for Montezuma’s Revenge and Pitfall, because the reward signals in those games were simply too sparse. This fascinating interactive browser game shows what a video game looks like to an RL agent that doesn’t have common sense driven by visual cues (each pixel sprite has been scrambled).
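A back-of-the-envelope sketch can show why sparse rewards are so punishing for an agent that explores at random. It assumes, as a deliberate simplification of mine, that the first reward only arrives after one specific sequence of actions and that the agent picks actions uniformly at random. The function names and the number of required steps are hypothetical; 18 is the size of the full Atari action set.

```python
def chance_of_random_success(num_actions: int, required_steps: int) -> float:
    """Probability that uniformly random play produces one specific
    sequence of actions (simplified model of a sparse reward)."""
    return (1.0 / num_actions) ** required_steps


def expected_episodes(num_actions: int, required_steps: int) -> float:
    """Average number of attempts before the reward is seen even once."""
    return 1.0 / chance_of_random_success(num_actions, required_steps)


NUM_ACTIONS = 18     # full Atari action set
REQUIRED_STEPS = 10  # hypothetical length of the sequence leading to a reward

print(f"chance per attempt: {chance_of_random_success(NUM_ACTIONS, REQUIRED_STEPS):.3e}")
print(f"expected attempts:  {expected_episodes(NUM_ACTIONS, REQUIRED_STEPS):.3e}")
```

Under these assumptions the number of attempts grows exponentially with the length of the required sequence, so an agent that only learns from rewards it happens to stumble upon has almost nothing to learn from.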

In 2021, a team at Uber found a method for “hard-exploration” problems in RL that learned how to play these games, but it received surprisingly little attention. DeepMind had solved this problem in 2017, but only by using human demonstrations: the systems learned how to explore by watching players play the game.

Can these techniques be adapted to be used in today’s video games? And can the integration cost be minimized to connect with existing game engines?

I find myself intrigued by the opportunity of learning to test video games with AI agents.

This is the kind of ‘foundational system’ that a video game publisher can provide to development teams or studios to reduce their QA costs and augment the quality of the game without sacrificing iteration time.

There are no off-the-shelf solutions in this space that I know of, and one can’t simply pipe each video game frame into a multi-modal foundational model as-is and expect it to work. The intersection of LLMs and RL is a very active area of research for both fine-tuning and System 2 thinking, and it sits at the frontier of what is possible right now.

🐉 Dragon #8 - AI Players for Bootstrapping

An expansion of the previous point is the ability to use AI bots as players to “fill up” an online game when not enough players are present.

This is even harder than before because while QA doesn’t have real-time needs, fake AI players need to operate and behave at the speed of other players. Solving the QA automation problem feels like a necessary condition to achieve value in this space but not a sufficient one unfortunately due to these additional low-latency constraints.

Playing against “artificial players” is a very established part of video games, but these AIs generally rely on crude and unsophisticated techniques which have not improved much over the last several decades.

In many cases, these solutions come as part of the gaming engines and work relatively well. When they work well, this will not change much and for good reasons.

But game developers are starting to ask themselves what it would take to imbue their player AIs with the kind of nuance and sophistication exhibited by LLMs. This gap is very large and not something that a game developer can bridge during their routine development schedule.

On the other hand, the ML research community is interested in training AI agents to win against human players (or other AI methods) but they are not interested in helping players obtain a more gratifying and entertaining experience during gameplay.

These incentives are not necessarily aligned, which suggests that some adaptation and bridging might need to be performed to turn winning AI strategies and algorithms into appropriate support systems for augmenting gameplay.

🐉 Dragon #9 - Bridging Tooling Gaps

Video game development is rapidly embracing various AI tools, given their clear opportunities for benefit. For example, machine translation is revolutionizing how games are internationalized, making them accessible to a global audience. There are also plugins emerging that integrate AI into artists' creative processes, like generative fill in Photoshop. Even the characters we interact with in games, known as NPCs (Non-Player Characters), are becoming more lifelike thanks to AI (see InWorld and Convai).

However, seamlessly incorporating these AI solutions into the established workflows of game studios may not be straightforward. Let's look at a few examples:

Machine Translation for Internationalization - It's not just about translating the words in a game; it's about capturing the game's narrative context (which is difficult to obtain without playing). Plus, the translated text needs to fit within the game's user interface, which can vary across different platforms or require resizing to fit content in different languages. This may require AI that can understand context and even have visual awareness and context on how the UI is coded.

Interactive NPCs - While AI can make these characters more interactive, there's a risk of them feeling 'soulless' or like mere fillers in the game. The current limitations in AI's logical reasoning (what we discussed as 'System 2' thinking) and potential delays in response time might stop these characters from feeling genuinely intelligent and engaging. Also, the cost of running such advanced AI in real-time could be prohibitive.
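For the machine translation example above, one small piece of the integration work can be sketched as an automated check that flags translated strings likely to overflow their UI element. Everything here is hypothetical: the string keys, the per-element character budgets and the German strings are invented for illustration, and counting characters is only a crude stand-in for measuring rendered width with the game's actual fonts.

```python
from typing import Dict, List


def find_overflowing_strings(
    translations: Dict[str, str],
    max_chars: Dict[str, int],
) -> List[str]:
    """Return the keys whose translated text exceeds the character budget
    of its UI element. Keys without a budget are not checked."""
    overflowing = []
    for key, text in translations.items():
        budget = max_chars.get(key)
        if budget is not None and len(text) > budget:
            overflowing.append(key)
    return overflowing


# Hypothetical budgets derived from the source-language layout.
ui_budgets = {"menu.start": 10, "menu.settings": 10}

# Hypothetical machine-translated German strings.
german = {"menu.start": "Start", "menu.settings": "Einstellungen"}

overflowing = find_overflowing_strings(german, ui_budgets)
print(overflowing)
```

A check like this only catches the layout half of the problem. The narrative context half still needs either a human in the loop or a model that has access to more than the isolated string.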

As the gaming industry continues to experiment with these GenAI tools, the big question remains: can they be integrated effectively into the complex and nuanced process of game development?

The hype around ChatGPT and Midjourney catalyzed a lot of investment into tools that can be used for video game creation, both during development and at runtime.

But video game studios are generally risk-averse when it comes to putting risky technologies on the critical path of games in development. Startup offerings are perceived as especially dangerous to rely on.

This leaves a gap that is difficult to bridge by either the game development teams/studios or by the tool makers themselves. This gap can become an opportunity for other entities in a supporting role to shape and augment.

🐉 Dragon #10 - Marginal Content Output

Non-interactive and interactive entertainment face the same organizational challenges of coordinating a lot of very diverse talent toward the common goal of entertaining consumers.

Many very successful AAA games can be perceived as “interactive films” in which the adventure is somewhat scripted but some of the content and experience is generated by the interactivity of the player (for example, Uncharted). They end up costing just as much as (if not more than) their non-interactive counterparts, and they share similar financial risk structures.

The ability to play with other people changes this equation substantially because other people’s play provides additional content during their own entertainment. This is a symbiotic relationship in which entertainment for one player becomes content for the other player, at very little cost for the game developer (effectively, just cloud and maintenance costs). This is how games such as Fortnite and League of Legends operate and can be so massively profitable despite being free to play.

In this modality, game design changes from shaping the player’s experience to shaping the opportunity for the emergence of that symbiosis between entertainment and content creation. This is why the marginal content output of a given member of the 16-person (!) Clash Royale team is much larger than that of each of the hundreds of crew members working on a single additional episode of Game of Thrones or an additional chapter for Uncharted.

There are both opportunities and risks here for Generative AI, as it can be seen as a way to “improve marginal content output” without impacting the existing labor or organizational structures. This feels shortsighted and unworkable, because designing content for consumption and designing content for marginal content emergence are far enough away from each other that it’s highly unlikely that they can be bridged simply by reducing the cost of curated content creation (or by replacing parts of this content creation with GenAI tools wholesale, like AI-generated NPCs).

Yet, it might be possible for GenAI to reduce this gap (the tools providing an emergence boosting of sorts) and thus help organizations that already mastered content production to approach designing for emergence with more curiosity and a more open mind.

Studios trying to shift their stance toward more sustainable content creation practices will likely find it a difficult transition which requires changing some of the organizational muscles which brought them to where they are today.

The introduction of Generative AI tools might be an opportunity to bridge these gaps, but it could also rub against the curation and artistic control needs that feel necessary to hit the quality and vision consistency promise implicitly made to both players and investors.