The In-Context-Learning Puzzle

In which we discuss how large language models' ability to perform some tasks from natural language appears to come out of nowhere and nobody really knows why.

Last week I stumbled upon the paper “A Survey of Large Language Models”, which is very long but gives a very nice overview of the state of the art around LLMs.

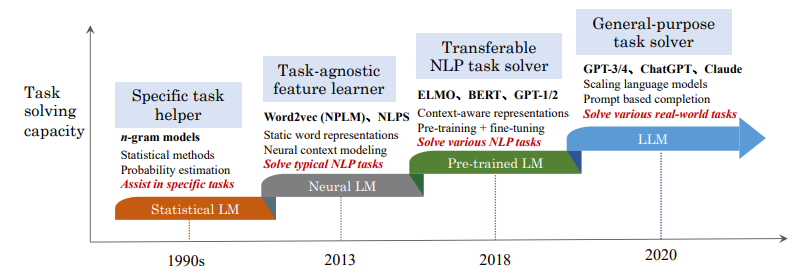

It contains this picture which I believe does a great job summarizing the evolution of progress around NLP (natural language processing).

They identify the transition between the “pre-trained LM” and the “LLM” phase with the paper “Language Models are Few-Shot Learners” from OpenAI that first discovered “in-context learning” (ICL) in GPT-3.

I write “discovered” on purpose: the extremely interesting thing about ICL in GPT-3 is that the neural architectures of GPT-2 and GPT-3 are almost identical. The only architectural difference is that GPT-3 alternates dense and locally banded sparse attention patterns in the layers of the transformer, similar to what OpenAI did in their Sparse Transformer paper. This is simply a memory optimization they introduced to be able to train such a big model, even though they knew it would cost a little performance.

The most significant difference is scale: GPT-3 is much bigger (context 1024 → 2048 tokens, embedding dimensions 1600 → 12288, attention heads 25 → 96, decoder layers 48 → 96, for roughly 1.5B → 175B parameters) and it was trained on a lot more data (5B → 300B tokens). GPT-3 behaves differently from GPT-2 despite doing, conceptually, the same thing. How is this possible?!
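
To get a feel for how much of that jump comes from depth and width alone, here is a back-of-the-envelope sketch. It assumes the configurations reported in the GPT-2 and GPT-3 papers (48 layers of width 1600 for the largest GPT-2, 96 layers of width 12288 for GPT-3) and uses the common rule of thumb of about 12 × layers × width² weights for the transformer blocks. The function name is mine, and embeddings, biases and layer norms are ignored, so treat the output as an estimate.

```python
def approx_params(n_layers: int, d_model: int) -> int:
    """Rough count of the weights in the transformer blocks only.

    Each block holds about 4 * d_model^2 attention weights (Q, K, V, output)
    plus 8 * d_model^2 feed-forward weights (two d_model x 4*d_model matrices).
    Embeddings, biases and layer norms are deliberately ignored.
    """
    return 12 * n_layers * d_model ** 2


# Assumed configurations (taken from the published papers, not from this post).
gpt2_xl = approx_params(n_layers=48, d_model=1600)
gpt3 = approx_params(n_layers=96, d_model=12288)

print(f"GPT-2 XL: ~{gpt2_xl / 1e9:.2f}B parameters")
print(f"GPT-3:    ~{gpt3 / 1e9:.0f}B parameters")
print(f"ratio:    ~{gpt3 / gpt2_xl:.0f}x")
```

Doubling the depth and making the network almost eight times wider is enough to account for a model about two orders of magnitude larger.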

The paper calls it a “phase transition”: the same stuff in a different setting exhibits different behaviors (like, for example, how adding enough heat to liquid water turns it into steam and makes it compressible). ICL is what ultimately created the LLM craze we’re experiencing today.

So, where is ICL coming from?! At this time, apparently, nobody knows for sure.

These models are becoming so large that studying them directly (say, by debugging what each matrix multiplication does) is impractical. Just to give a sense of this: if we used a single pixel to depict a single weight on a high-resolution 4K computer screen, a model the size of GPT-3 would need tens of thousands of screens! And it would look like random noise fluctuating at hundreds of frames per second! It makes reading the Matrix from its scrolling code look trivial in comparison.
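
The arithmetic behind that picture is easy to check. This is a rough sketch that assumes a 3840 × 2160 screen, a single square weight matrix of width 12288 (GPT-3's published embedding size) and 175B weights in total. The variable names are just for illustration.

```python
import math

PIXELS_4K = 3840 * 2160          # pixels on one 4K screen
D_MODEL = 12288                  # assumed width of one square weight matrix
TOTAL_PARAMS = 175_000_000_000   # assumed total number of weights

# One pixel per weight: how many screens for one matrix, and for the whole model?
screens_for_matrix = math.ceil(D_MODEL * D_MODEL / PIXELS_4K)
screens_for_model = math.ceil(TOTAL_PARAMS / PIXELS_4K)

print(f"one {D_MODEL}x{D_MODEL} matrix: {screens_for_matrix} screens")
print(f"all weights: {screens_for_model:,} screens")
```

A single matrix already spills over more than a dozen screens, and the full set of weights runs into the tens of thousands.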

So people have been studying the properties of these LLMs, probing what they can and can’t do, to try to guess how they do it. As Gordon Brander said to me in a recent discussion on the topic of agency in LLMs, “software is getting softer”. The boundary between silicon systems and biological systems is blurring.

So, what do we know about ICL so far? Here is a list of the things I’ve been able to piece together (also drawing on this other paper, which specifically surveys ICL).

Context as working memory

I recently came across a quote that I found very thought provoking:

A human with paper and pencil is smarter than one without.

which appears to be rephrased from a quote attributed to Einstein. I wrote about this in my previous post but it’s worth repeating here: the way we use LLMs in chatbots lets them use the context as a “working memory”. It reminds me, vaguely, of how a Turing machine uses its tape.

It is possible that by asking the LLM to “show your work” we nudge it into exposing the inner workings of its reasoning. This creates a system in which the intelligence is not really encoded in the way the model operates but in the interaction of three things: the prompt that nudges it into this posture, the context used as working memory, and the auto-regressive nature of the operation (which self-reinforces both the narration of the task and its completion).

This is similar to the replication properties of a virus: they are not directly executed with machinery the virus possesses; they are an emergent property of the system composed of the virus and the cunningly hijacked replication machinery of the infected host.
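
A toy sketch can make the “context as working memory” idea concrete. Everything here is hypothetical and stands in for a real LLM: `next_token` is a stateless function that can only add two numbers per call, and `generate` is the auto-regressive loop that appends each output to the context and feeds it back in. The running total lives only in the context, never inside the “model”.

```python
def next_token(context):
    """Stateless toy 'model': one small step per call.

    Everything before "|" is the input; everything after it is the scratchpad
    the model has written so far. The only state is the context itself.
    """
    sep = context.index("|")
    inputs, scratch = context[:sep], context[sep + 1:]
    if len(scratch) == len(inputs):
        return "<eos>"  # every input has been folded into the running total
    running = int(scratch[-1]) if scratch else 0
    return str(running + int(inputs[len(scratch)]))


def generate(prompt, max_steps=50):
    """Auto-regressive loop: append each output and feed the context back in."""
    context = list(prompt)
    for _ in range(max_steps):
        token = next_token(context)
        if token == "<eos>":
            break
        context.append(token)
    return context


result = generate(["3", "5", "7", "|"])
print(result)  # the last token is the final sum
```

No single call can sum the whole list; the capability belongs to the loop plus the scratchpad, much like the virus needs the host’s machinery.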

Task Recognition vs. Task Learning

This paper shows how LLMs are able to use learning and recognition simultaneously to accomplish an ICL task. Intriguingly, task recognition is the easier ability to obtain: even a relatively small LM with only 350M parameters can exhibit it. The paper claims that task learning, on the other hand, can only emerge in LLMs with at least 66B parameters. If this is true, it could have a dramatically chilling effect on ICL research in non-industrial settings (such as open source or academia).

This paper supports that finding by experimenting with how LLMs are affected by semantic priors vs. input-label mappings. They do so by flipping the labels of the in-context examples (negative vs. positive) or by swapping them for completely unrelated or invented labels. Small language models ignore the flipped labels and just act on semantic priors from pre-training. LLMs instead are able to override the semantic priors when presented with in-context examples that contradict them. This supports the idea that LLMs operate significantly differently from regular language models.
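
To make the flipped-label setup concrete, here is a minimal sketch of how such a prompt could be assembled. The template, the example reviews and the function name are all made up for illustration; the paper’s actual prompts may look quite different.

```python
# Hypothetical label set and flip mapping for a sentiment task.
FLIP = {"positive": "negative", "negative": "positive"}


def build_prompt(examples, query, flip_labels=False):
    """Format few-shot examples followed by an unanswered query.

    With flip_labels=True every in-context label contradicts what the text
    actually says, which pits semantic priors against the in-context mapping.
    """
    blocks = []
    for text, label in examples:
        shown = FLIP[label] if flip_labels else label
        blocks.append(f"Review: {text}\nSentiment: {shown}")
    blocks.append(f"Review: {query}\nSentiment:")
    return "\n\n".join(blocks)


examples = [
    ("I loved every minute of it.", "positive"),
    ("A dull, lifeless mess.", "negative"),
]
flipped = build_prompt(examples, "An absolute delight.", flip_labels=True)
print(flipped)
```

A model leaning on its priors would complete the query with “positive”; a model that follows the in-context mapping would answer “negative”.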

The training data influences ICL behavior

This paper shows that the transformer architecture works together with particular properties of the training data to drive the emergent ICL behavior. They show that ICL emerges in a transformer model only when trained on data that includes both burstiness and a large enough set of rarely occurring classes.

This paper somewhat confirms this finding, showing that repeated data in the training set causes a disproportionately large performance hit to copying, which in turn appears to be a necessary mechanism for the emergence of in-context learning.
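
The two data properties named above, burstiness and a long tail of rare classes, can be sketched with a small sampler. This is my own illustration rather than the paper’s data pipeline: it assumes class frequencies follow a Zipf-like 1/rank law, and that a “bursty” sequence is one where a couple of classes recur many times within the same context.

```python
import random


def zipf_weights(n_classes, alpha=1.0):
    """Long-tailed class frequencies: a few common classes, many rare ones."""
    return [1.0 / (rank ** alpha) for rank in range(1, n_classes + 1)]


def sample_sequence(rng, n_classes, seq_len, bursty, burst_classes=2):
    """Return (context, query) as lists of class ids.

    Bursty: a handful of classes recur throughout the context and the query is
    one of them, so the answer can be read off the context.
    Non-bursty: every item is drawn independently from the long-tailed prior.
    """
    weights = zipf_weights(n_classes)
    classes = range(n_classes)
    if bursty:
        chosen = rng.choices(classes, weights=weights, k=burst_classes)
        context = [chosen[i % burst_classes] for i in range(seq_len)]
        rng.shuffle(context)
        query = rng.choice(chosen)
    else:
        context = rng.choices(classes, weights=weights, k=seq_len)
        query = rng.choices(classes, weights=weights, k=1)[0]
    return context, query


rng = random.Random(0)
bursty_context, bursty_query = sample_sequence(rng, n_classes=1000, seq_len=8, bursty=True)
plain_context, plain_query = sample_sequence(rng, n_classes=1000, seq_len=8, bursty=False)
print(bursty_context, bursty_query)
print(plain_context, plain_query)
```

In the bursty case the query’s class always appears in the context, which rewards a model for looking things up there instead of memorizing them in its weights.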

Architecture matters…

The first paper above (the one on training data properties) also shows that recurrent models like LSTMs and vanilla RNNs (matched on number of parameters) were unable to exhibit in-context learning when trained on the exact same data, jabbing that “attention is not all you need – architecture and data are both key to the emergence of in-context learning.”

… while Precision does not

This paper shows how the ICL behavior still emerges even when the model is heavily quantized (down to just 4 bits per parameter). This is significant for inference and fine-tuning (and potentially explains how smaller open-weight models can still wow their users).
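
For a sense of what 4 bits per parameter means, here is a minimal sketch of symmetric round-to-nearest quantization. This is the simplest scheme I know of and not necessarily the one used in the paper; the function names and the sample weights are made up.

```python
def quantize_4bit(weights):
    """Map floats to integer codes in [-7, 7] with one shared scale.

    A signed 4-bit value has 16 levels; the symmetric scheme uses 15 of them
    so that zero is represented exactly.
    """
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


weights = [0.82, -0.41, 0.05, -1.30, 0.33, 0.0]
codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_err = max(abs(w - r) for w, r in zip(weights, restored))

print(codes)
print(f"scale={scale:.4f}, worst-case error={max_err:.4f}")
```

Every weight lands within half a quantization step of its original value, which is a surprisingly coarse grid for the behavior to survive on.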

ICL does $thing

This paper suggests ICL is doing gradient descent. Here, each layer represents a new step of descent, suggesting that architectural depth is a fundamental aspect of this kind of learning method.
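
Here is a minimal sketch of the kind of equivalence such an argument can rest on. It assumes the simplest possible setting, which may differ from the paper’s: linear regression, weights starting at zero, a single gradient step, and attention without the softmax. Under those assumptions, the prediction after one step of gradient descent is the same number as a linear attention readout with the in-context inputs as keys, their targets as values, and the new input as the query.

```python
import random


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def predict_after_one_gd_step(xs, ys, x_query, lr):
    """Start at w = 0, take one gradient step on mean squared error, predict."""
    n, d = len(xs), len(x_query)
    # Gradient of (1/2n) * sum((w.x_i - y_i)^2) at w = 0 is -(1/n) * sum(y_i * x_i).
    grad = [-sum(y * x[j] for x, y in zip(xs, ys)) / n for j in range(d)]
    w = [-lr * g for g in grad]
    return dot(w, x_query)


def predict_with_linear_attention(xs, ys, x_query, lr):
    """Softmax-free attention: keys = x_i, values = y_i, query = x_query."""
    n = len(xs)
    return (lr / n) * sum(y * dot(x, x_query) for x, y in zip(xs, ys))


rng = random.Random(0)
true_w = [1.5, -2.0, 0.5]  # hypothetical function hidden behind the examples
xs = [[rng.gauss(0, 1) for _ in true_w] for _ in range(16)]
ys = [dot(true_w, x) for x in xs]
x_query = [0.3, -0.1, 0.8]

gd = predict_after_one_gd_step(xs, ys, x_query, lr=0.1)
attn = predict_with_linear_attention(xs, ys, x_query, lr=0.1)
print(gd, attn)
```

The two numbers agree up to floating point rounding, which is what makes “one layer, one step” a plausible reading.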

This paper suggests ICL is doing implicit Bayesian inference where the pretrained LM implicitly infers a concept when making a prediction. The ICL ability emerges when the pretraining distribution follows a mixture of hidden Markov models.
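
The Bayesian reading can be illustrated with a much simpler toy than a mixture of hidden Markov models. In this sketch (my own simplification, not the paper’s setup) each “concept” is a coin with a fixed bias, the in-context examples are coin flips, and the prediction for the next flip averages over the concepts weighted by how well each one explains the context.

```python
def posterior(prior, biases, observations):
    """Bayes' rule over a finite set of candidate concepts (coin biases)."""
    unnormalized = []
    for p, b in zip(prior, biases):
        likelihood = 1.0
        for obs in observations:
            likelihood *= b if obs == 1 else (1.0 - b)
        unnormalized.append(p * likelihood)
    total = sum(unnormalized)
    return [u / total for u in unnormalized]


def predict_next(prior, biases, observations):
    """Probability that the next flip is 1, marginalizing over concepts."""
    post = posterior(prior, biases, observations)
    return sum(p * b for p, b in zip(post, biases))


biases = [0.1, 0.5, 0.9]       # three hypothetical concepts
prior = [1 / 3, 1 / 3, 1 / 3]  # what "pre-training" believes before any context
context = [1, 1, 1, 0, 1, 1]   # in-context examples

post = posterior(prior, biases, context)
print([round(p, 3) for p in post])
print(round(predict_next(prior, biases, context), 3))
```

Nothing is updated in the “model” here: the context only changes which concept the fixed prior ends up favoring.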

What can ICL learn?

This paper shows how a transformer can be trained to in-context learn complex function classes such as sparse linear functions, two-layer neural networks and decision trees. It’s anyone’s guess whether more “working memory capacity” in the model (in terms of context × embedding dimensions) would result in being able to learn more sophisticated things.
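
For the sparse linear case, the in-context “prompt” is just a list of (x, f(x)) pairs followed by a new x. The sketch below shows one way such a task could be sampled; the dimensions, the Gaussian sampling and the function names are my assumptions, not the paper’s exact recipe.

```python
import random


def sample_sparse_linear_task(rng, dim, n_nonzero):
    """Draw a weight vector with only n_nonzero non-zero coordinates."""
    w = [0.0] * dim
    for idx in rng.sample(range(dim), n_nonzero):
        w[idx] = rng.gauss(0, 1)
    return w


def make_icl_prompt(rng, w, n_examples):
    """Return in-context (x, f(x)) pairs, a query x, and its hidden target."""
    def f(x):
        return sum(wi * xi for wi, xi in zip(w, x))

    xs = [[rng.gauss(0, 1) for _ in w] for _ in range(n_examples + 1)]
    pairs = [(x, f(x)) for x in xs[:-1]]
    return pairs, xs[-1], f(xs[-1])


rng = random.Random(0)
w = sample_sparse_linear_task(rng, dim=20, n_nonzero=3)
pairs, x_query, y_target = make_icl_prompt(rng, w, n_examples=10)
print(len(pairs), len(x_query))
```

A model trained on many such tasks never sees `w`; it has to infer the function from the pairs in its context and predict the target for the query.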

Transformer Circuits

Anthropic seems to be interested in “why ICL emerges” as well, which isn’t surprising since they are some of the people who first experienced it with GPT-3 at OpenAI. They have published a theory and a follow-up paper on “transformer circuits”, although I have yet to fully digest their implications (which I’ll probably write about in the near future).

My Conclusions

Like many, I’m intrigued by this ICL behavior because it allows us to effectively fine-tune an AI by talking to it in natural language, which very much feels like the beginning of AGI, at least at the System 1 level.

I’m also intrigued by the System 2 limitations exhibited by ICL. Is this because of a lack of context capacity? Or not enough depth in the network to perform enough “gradient descent” steps on the context?

Or is there something inherently missing in the architecture (like this obscure paper seems to be hinting)?

My biggest concern is that if one needs an exaflop to research these questions, progress will be slow because it will be concentrated in the hands of those able to harness so much computational power (that is, cloud providers or the startups they fund with capital earmarked for computational expenses on their own clouds).

The end of Moore’s law and the concentration of high-tech silicon foundries in East Asia make things weird: TSMC in Taiwan and Samsung in South Korea are effectively the only games in town with the transistor density required to crank out matrix-multiplier accelerators at reasonable flop/watt levels. The threat of war in the region only makes things harder and more expensive.

But what if ICL is not really a property of size but a property of something else? What if this property can be made to emerge at a dramatically lower computational cost (see, for example, this paper for something that offers an alternative to transformers in this space)?

I don’t have the answers but I find myself intrigued by these questions.