The Multi-Player Factorio Problem

In which we draw a parallel between the latest trends of agentic software engineering and the complexities highlighted by playing factory-simulation video games with multiple players

The world of software development has changed dramatically since the release of Claude 4.0 last year; next month it will have been a full year. Everything that was true a year ago needs to be re-evaluated now.

My read of this dynamic is that even if LLM improvements stopped right now, we’re already in a world in which machines code better than I can. This has happened before: compilers generate machine instructions far better than I can. I haven’t had to “write assembly by hand” since the ’90s, and I don’t know how many of my colleagues ever actually wrote assembly language by hand.

I fully expect that “reading code” will become as uncommon as people looking at the assembly spit out by their compilers. I don’t know when that’s going to happen, but I am now convinced that’s the future we’re racing toward, because it’s already here, just very unevenly distributed.

So, with that assumption, I want to spend some cycles in this post trying to understand what that world will look like, what might be different and what might stay the same.

Jevons Paradox

Let’s start with the elephant in the room: will the AIs take our jobs? No, I don’t think so. The history of computer programming is riddled with attempts to get rid of human programmers (see here for a great historical account of them), but not only did they fail: by making it easier to write software, they caused more of it to be written, which ended up requiring more people to keep it running (this is Jevons Paradox).

It’s not all roses tho: I’m sure there will be a period of uncertainty in which headcount budgets will be traded for token budgets (which are far more elastic and carry far fewer moral consequences when downsized), but I don’t think that will last long. This reminds me of the “outsource to India” craze of the ’90s, which simply evaporated once executives realized that saving on some salaries while multiplying coordination headwinds for everyone else wasn’t a good trade.

I suspect the same will happen here: it will be far easier to start something (even CEOs will prompt software into existence and use it to jumpstart things and apply pressure), but the cost of keeping it running will continue to be the submerged part of the iceberg.

Compilers automated the creation of machine code, but that didn’t make programming go away; quite the opposite: it made programming explode and resulted in much bigger and more complicated programs. AI tools will write most of the code, which means we will be able to take on much bigger and more sophisticated programs.

Software acts as a gas, not as a liquid: it expands to fill whatever space it is given. AI tools expand human reach, what humans dare to attempt, and as a result they reach further, they aim higher, they build bigger… which also increases the risks, the failure modes, the complexity… which also increases the blast radius of failure, the cost of maintenance… which will likely result in the same amount of human labor being needed to keep it all from falling apart and aligned with the interests of their employers.

The Software Factory

Very few projects dare to ship code without anyone ever looking at it, but projects like OpenClaw and Gas Town are starting to do exactly that. They look nuts to most people today, but I feel they are showing us what the future looks like: swarms of agents rubbing against each other toward a stable outcome that satisfies some dictated constraints (specs, tasks or tests; it doesn’t matter which, as long as they are generally deterministic in nature).

This has one important side effect: every software engineer, in this posture, is effectively forced to be a Tech Lead (TL), organizing the work of other actors with imperfect control instead of doing the work themselves. I grew up in open source, and I learned to organize the labor of others with imperfect control from the start. This was effectively TL bootcamp for me, but for most people the transition from IC (individual contributor) to TL is painful, takes years and benefits from a good support system of mentoring and graceful performance management. All of that goes out the window here: every IC, at any seniority level, will be forced to lead a small army of agents they can’t fully control, or risk being left behind as low impact.

This is a new type of digital divide: not just access to tokens (pay-as-you-go tools are very new in a software industry used to flat fees), but, even after gaining access to those tokens (say, subsidized by our employers), the ability to delegate and coordinate work thru other agents with imperfect control, which is not obvious and might become a dividing factor.

Each IC is effectively the operator of a software factory… one that can outcode any human programmer, even the 10x kind, while they sleep.

Do you know of anyone today who is so good at writing assembly language by hand that they refuse to use compilers and are still employed?

Alignment

Let’s fast forward a little and imagine that every member of our team of 5 is manning their own software factory and each can write 50k lines of code every night while they sleep and recode half of what the rest of the team did the day before.

We gave everyone cars but we didn’t worry about the roads so now we’re stuck in traffic.

We now have swarms of robots that can build what we want… but the problem moves from what we want to build to what we DO NOT want to build, and to how we can make more targeted changes that speed us up without slowing others down.

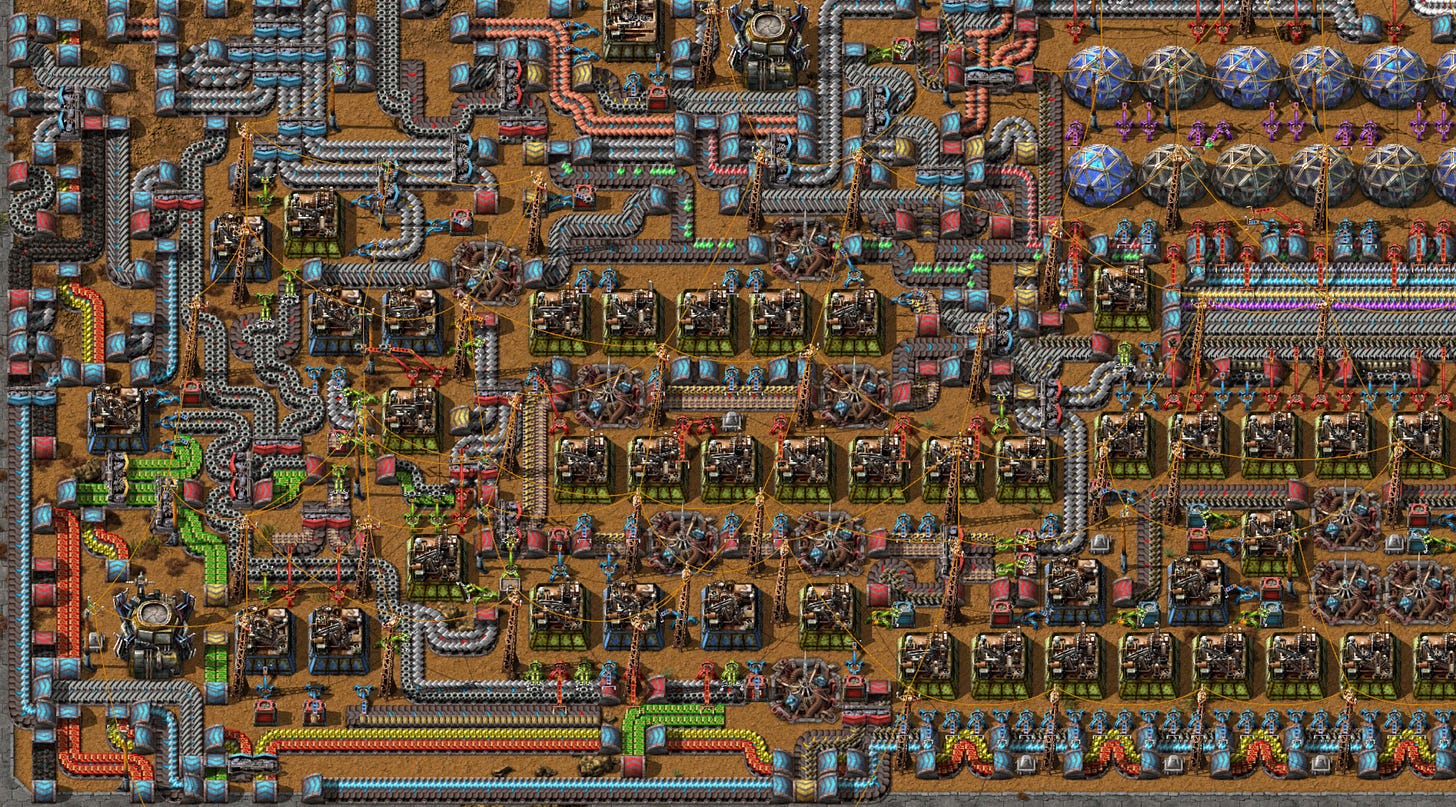

Here, I’m reminded of playing multi-player Factorio.

Factorio is a masterfully designed video game in which we’re stranded on an alien planet and have to build a rocket to escape it. To build the rocket, we have to develop all of the tech that goes into it, and to do so we have to build, manage and oversee a factory that produces and researches that tech.

The genius of Factorio is the aliens: they come to destroy our factory, so we need to spend resources defending it. Also, the aliens eat our pollution, so the more factory, the more pollution, the more aliens, the more weapons, and the more resources are spent keeping the lights on instead of building the rocket. Our job is not just to build and figure out how everything flows together but also to defend it, repair it when damaged and balance the resources we spend building vs. defending vs. repairing.

It is a perfect reproduction of the dynamics of software development: gluing together reusable modules to compose larger modules, maintaining them despite their internal fragility, finding chokepoints, defending them from the erosion of usage and the uncertainty that comes with changes of scale, allocating resources between new vs. old, deciding whether or not to pay off technical debt, and allocating resources toward derisking failure vs. furthering our goals.

But the game changes dramatically when we’re playing with somebody else: alignment enters the picture. We need to coordinate to deal with opportunity costs. If I want to spend an hour refactoring the steel plate pipeline, I can’t spend that hour expanding the defenses of the oil fields, which is what you think is our most urgent priority.

Without aliens, we could just pause and hash out why I think refactoring the steel plate pipeline is more useful than expanding the defenses of the oil fields, but with aliens around, every second spent not improving the factory is a second of advantage handed to the aliens feeding on our negative externalities.

I know a lot of people who love Factorio but don’t like playing it with others, precisely for this reason: alignment is a lot of work, and alignment under time pressure is even worse. It stops being fun; it’s frustrating; it’s too reminiscent of real work.

Another thing that’s curious about this is that as we progress thru the tech tree in Factorio, we gain access to robots that can work on our behalf. We can create “blueprints” of pieces of the factory we want to build and the robots do it for us.

This, crucially, makes the alignment cost feel worse. Not only do I have to worry about your refactor damaging my plan, but now your robots can carry it out so quickly that I’m not even aware it’s happening until it’s well underway and more expensive to undo than to finish.

See where I’m going with this?

My fear is that AI coding agents make “coding” easier but make “software engineering” harder because:

they extend our reach, our appetite and the complexity of the results and

they make coordination with other humans feel much more expensive (because it’s beyond the reach of automation and always will be)

To put it another way:

Without the synthesis process of critique, everyone who vibe coded a thing now has their own mental model, which means they are no longer actually a team.

This kills the project.

Agentic cgi-bin

We are in the “cgi-bin” era of agentic engineering. It’s so fun and easy to get something done, with low-hanging fruit everywhere, but so hard to know what is good and what will turn out to be a bad idea.

Everyone and their dog is writing their own outer loop (including me, see Schmux). We are having a blast doing it, but we are very far from design patterns consolidating into “best practices”.

Still, a month in and with 200k lines of code clocked on Schmux alone, there are things I can already see emerging:

“reviewing code” needs to change. There will be too much of it, too many stacked commits and too much involving tech stacks we don’t fully understand or feel comfortable with (but agents do). We can either limit code production or roll with the punches, pit agents against each other and have some of them review the output of others. We have no idea what works well in this space yet, but there are indications that pitting agents against each other with different personas or prompts works well. I expect this trend to solidify into best practices.

“continuous integration” needs to change. We need “agentic CIs” that can run loops over the code and validate it against things like “identify and remove test slop” or “make sure specs and code stay in sync”. We have never had the ability to do an “in-depth security review”, “in-depth privacy review” or “in-depth performance review” for every change that lands in the repo, but now we can. How do we square the utility of such automation with the imperfect yet overconfident nature of its findings? How do we evaluate the ROI here?

“token budgets” will become a huge factor. Curiously, employers (like mine) who give “unlimited tokens” to their engineers might actually be doing themselves a disservice, because that prevents the emergence of clever uses of model composition (a sort of “fractional distillation” of cheaper models feeding more expensive ones in an agentic hierarchy of competence and cost), as well as the time savings that come from more efficient agentic execution and modular context reuse.

“alignment artifacts” will become the center of gravity of production and the hotspot of coordination headwinds. Conceptually, this has always been the case, but in practice “product requirement documents” (PRDs) or “design docs” go stale almost immediately as the building process progresses. This “drift” is almost never corrected, and it’s considered part of the cost of doing business because the “software” is where the value really is; these artifacts are merely a means toward a building end. This changes dramatically if the “spec → build” period shrinks from months to hours. Drift between “spec” and “code” will turn toxic really quickly once humans can no longer rely on reading code to understand behavior (and soon they won’t, because the code will be as impenetrable to them as the assembly language generated by compilers). Until now we have considered keeping specs and code aligned a “nice to have”, but I feel it will become imperative if our internal build loops are to compound.

“context management” and “agentic loop design” will become engineering disciplines in their own right. Things are moving too fast right now for the dust to settle, but soon we will have loop shapes that become “best practices” and get baked into outer loops or orchestration systems (Claude Code does a little of this with its “plan” mode). I suspect a lot of them will feel like extensions of OODA loops.
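The “fractional distillation” idea from the token-budget point can be made a bit more concrete with a small sketch. It assumes a ladder of models ordered by cost, where each tier sees the previous tier’s draft and the cascade only escalates while the model’s self-reported confidence stays below a threshold. Everything here is hypothetical: the `Tier` type, the `cascade` function, the stand-in models, the costs and the 0.8 threshold are illustrations, not Schmux code or any real model API, and a real system would need a more trustworthy signal than a model grading its own confidence.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical model signature: (task, draft from the cheaper tier or None) -> (answer, confidence in [0, 1]).
ModelFn = Callable[[str, Optional[str]], tuple[str, float]]


@dataclass
class Tier:
    """One rung of a hypothetical model ladder, ordered from cheapest to most expensive."""
    name: str
    cost_per_call: float  # illustrative units, not real pricing
    run: ModelFn


def cascade(task: str, tiers: list[Tier], threshold: float = 0.8) -> tuple[str, str, float]:
    """Run tiers in order, feeding each one the previous tier's draft.

    Stops at the first tier whose self-reported confidence meets the threshold.
    Returns (answer, name of the tier that produced it, total cost spent).
    """
    draft: Optional[str] = None
    used, spent = "", 0.0
    for tier in tiers:
        draft, confidence = tier.run(task, draft)
        spent += tier.cost_per_call
        used = tier.name
        if confidence >= threshold:
            break  # good enough: skip the more expensive tiers
    return draft or "", used, spent


# Stand-in "models" so the sketch runs without any real API.
def small_model(task: str, draft: Optional[str]) -> tuple[str, float]:
    # Pretend the cheap model is only confident on short tasks.
    return f"draft:{task}", 0.9 if len(task) < 20 else 0.4


def large_model(task: str, draft: Optional[str]) -> tuple[str, float]:
    # Pretend the expensive model refines whatever the cheaper tier produced.
    return f"refined:{draft}", 0.95


tiers = [Tier("small", 1.0, small_model), Tier("large", 10.0, large_model)]

if __name__ == "__main__":
    print(cascade("rename a variable", tiers))
    print(cascade("migrate the billing service to the new queue", tiers))
```

With these stand-ins, the short task stops at the cheap tier for a cost of 1.0, while the longer one escalates and costs 11.0. Passing the draft upward is what separates this from plain routing: the expensive tier starts from the cheaper tier’s work instead of from scratch.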

It’s also worth pointing out that models don’t need to get smarter for all this to start happening. We are already there, just very unevenly distributed. Claude Opus 4.6 is already a much better coder than I will ever be. It’s very much not a better software engineer tho… but not for lack of intelligence. It lacks personal taste, personal experience, proactive versatility, sample efficiency, solid and consistent continual learning and, last but not least, direct accountability.

Proactivity is something we are starting to see emerge (both OpenClaw and Schmux have features of this kind), but accountability is structurally incompatible with them. Their continual learning abilities are crude and kludgy, and their sample efficiency for behavioral adaptation is weak even in the best cases. We are not in a comfortable place when disaster is one “context compaction” away.

Next Steps

I’ve spent the last several weeks working on Schmux as a way to 1) kick the tires of agentic engineering and 2) force myself into a “multiplayer Factorio” scenario and see which tools we are missing to provide the environment scaffolding we need to simplify the job and prevent pain and traffic.

Features like “lore” and the “floor manager” are my attempt to understand how to build compounding loops as part of the agentic outer loop. It’s early days, but it’s exciting because nothing is written in stone yet and we get to explore and invent new ways of building software together.