English as a Programming Language

In which we evaluate the benefits and risks of using natural language to drive repeatably consistent machine operations.

The most surprising emergent property of large language models is the ability to steer their behavior by adding instructions in natural language (aka “in-context learning” or “few-shot learning”).

It took a few years for the dust to settle, but most people now realize that this means English can be considered a programming language for LLMs.

More and more machine instructions are being encoded into English and submitted to version control and treated as code.

This might feel obvious but has surprisingly non-obvious consequences.

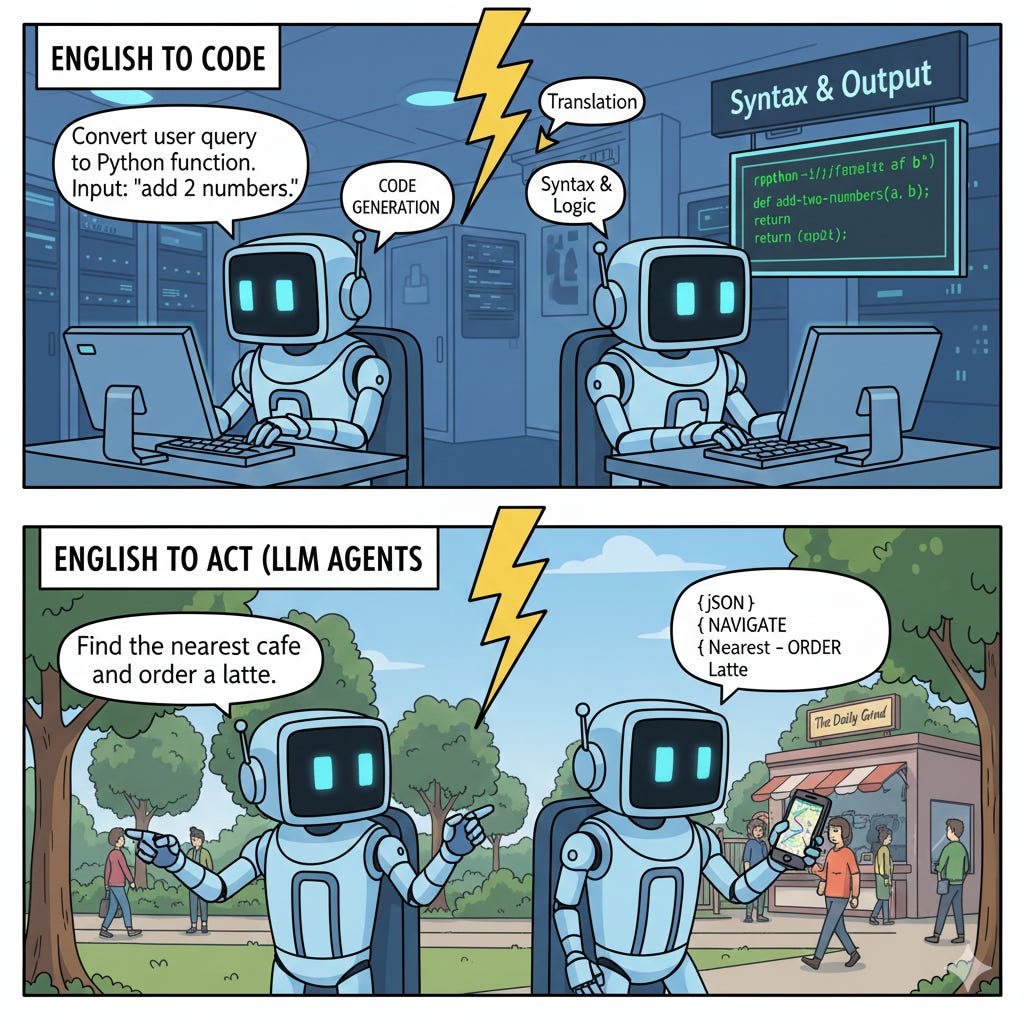

English to code vs. English to act

Let’s say that we’re working on a new feature for our favorite mobile app and we want to make sure that it does what we want on all versions of iOS and Android in the hands of users.

One option is to manually test the change. This is tedious and repetitive but for some things (say, video games) manual QA remains the best way to do it.

Another is to automate such tests with another computer program. These are called “integration tests” or “end2end” tests. In practice, these tests are difficult to write because they need to adapt to all the various ways in which the app can behave at runtime. For example, if an unexpected dialog comes up, it’s trivial for a human to click “ok” on that dialog and ignore it, but an automated test might get stuck and time out because it has no ability to improvise.

But what if we now tell an agent powered by an LLM what we want it to test and let it figure it out? Instead of having a set of “testing instructions” be executed by a human, we could use reasoning models with tool calling and multi-modal abilities to “run the test” by acting on an emulator and observing the pixels on its screen, just like a human tester would.

In the first case (English to code), we use English to steer the behavior of an agent that helps us write the code for the test in a regular programming language. In the second (English to act), we use English to describe the test we want and the commands available, and let the agent figure out how to perform the test, just like we would with human testers.

Letting go of determinism

The very reason why we write computer programs is that:

the same operation can be performed over and over, often more cheaply, consistently and reliably than we could otherwise (aka scalability)

we get the same result every time we run the same operation (aka determinism)

There is no question that machines are better at performing the same operation over and over. They don’t get bored, they don’t get tired, they don’t get annoyed by mindless repetition. LLMs are no exception to this (even if tokens are more scarce and thus more expensive than CPU instructions).

But one thing is different: LLMs are inherently probabilistic machines, and we value them over strictly coded programs precisely for their ability to be flexible in executing their instructions and to improvise.

So, in our end2end test case, we like a tester that can improvise and route around unforeseen obstacles, but we can’t have that ability without also letting go of determinism: the automated tester will sometimes fail simply because it fell off the beaten path and got lost, not because the change didn’t behave as expected.

We are used to calling non-deterministic tests “flaky” and we remove them from our CI system because they can give us false positives. They “cry wolf” and make us believe that changes to our program made it fail the test while, in fact, our changes were fine and it was the test that was noisy. This is generally frowned upon because it wastes development resources.
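To put a rough number on how quickly this noise compounds, here is a back-of-the-envelope sketch. It assumes that every test passes independently and with the same probability on a correct change; the 99% pass rate and the suite sizes are made-up numbers for illustration, not measurements.

```python
def false_alarm_rate(per_test_pass_rate: float, num_tests: int) -> float:
    """Probability that at least one of `num_tests` independent tests
    fails even though the change under test is actually fine."""
    return 1.0 - per_test_pass_rate ** num_tests


# Hypothetical suite sizes, each test passing 99% of the time on good changes.
for num_tests in (1, 10, 50):
    rate = false_alarm_rate(0.99, num_tests)
    print(f"{num_tests:>3} tests -> {rate:.1%} chance of crying wolf")
```

Under these assumptions, a suite of 50 agent-driven tests that are each 99% reliable would still flag a perfectly good change roughly 40% of the time.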

English for humans vs. English for machines

But let’s say we bite the bullet and let go of determinism. The next problem is that we now have files containing English that are load-bearing for agent-powered systems, and files containing English that are not.

Crisp example: our project README.md file vs. our project CLAUDE.md file. They are both markdown, they are both English, they are both useful and it’s valuable to keep them up to date, but fixing a problem in a readme feels like a nice to have, while fixing the core instructions to our agent feels like a much bigger deal in terms of value and urgency.

But now, imagine that we stumble upon a project that contains 1000 markdown files.

Are these docs or are they programs? How can we be sure? Are they all isolated programs (like tests would be) or do they reference each other (like a set of reusable modules/libraries)?

And what happens if we are asked to review a change to one of them? How can we tell if a change is beneficial? How can we tell if there are negative side effects we might not want? And how can we tell if the benefit of the change is more than the problems it might cause?

For computer programs written in deterministic structured languages, we have compilers, linters, tests and, hopefully, CI/CD systems that tie them all together and help us predict the potential negative externalities of a change.

But what do we do if we need to evaluate the utility/risk of a PR that touches one of these English programs?

From Testing to Experimenting

Back in the days of boxed software and master copies, software was very much like movies or music records: there was a release date and we had to be damn sure that the software product was as good as we could make it before sending it to the printers that would make a large number of copies.

The internet changed all that. Not only were updates cheaper but, in extreme cases, every web page was a complete just-in-time download of a full application. Refreshing the page potentially got us a different version.

This also meant that we could send different releases to different users, let them “try out” changes, and then revert those changes if they didn’t work as expected (and often users wouldn’t even realize this was happening).

This allowed the creation of A/B experiments: we partition our user population into two groups, A and B, we give the “treatment” to A and we don’t give it to B (the control group). We measure the same metric on both groups and obtain a difference between the two. Crucially, with some statistics magic, we also obtain a measure of the “confidence” of that result (basically, a measure of how likely it is that the result we obtained was due to chance, rather than caused by a real difference).
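As a minimal sketch of that “statistics magic”, here is a two-proportion z-test using only the Python standard library. It is one common way to compare a success rate between two groups, not necessarily what any given experimentation platform uses, and the function name and the counts in the example are made up for illustration. The p-value it returns is the probability of seeing a difference at least this large if there were no real difference between the groups.

```python
import math


def two_proportion_z_test(success_a: int, n_a: int, success_b: int, n_b: int):
    """Return (difference in success rate, two-sided p-value) for A vs. B."""
    rate_a = success_a / n_a
    rate_b = success_b / n_b
    # Pooled rate under the null hypothesis that A and B behave the same.
    pooled = (success_a + success_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        # Every outcome was identical: there is no evidence of a difference.
        return rate_a - rate_b, 1.0
    z = (rate_a - rate_b) / std_err
    # Two-sided tail probability of a standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_a - rate_b, p_value


# Hypothetical counts: treatment group A vs. control group B.
lift, p_value = two_proportion_z_test(success_a=530, n_a=1000, success_b=500, n_b=1000)
print(f"lift={lift:+.3f}, p-value={p_value:.3f}")
```

A small p-value suggests the difference is unlikely to be pure chance; a large one means the experiment can't tell the two groups apart at this sample size.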

A/B experimentation is a non-deterministic task that can only be performed with statistical inference. This seems to suggest that running A/B experiments for changes to these English programs would be a natural match, given the uncertainty about them and the non-deterministic nature of their execution.

But experiments are expensive and difficult to plan and execute. Does it mean that if we interpret English code on probabilistic machines, we are destined to choose between YOLOing our changes and testing in prod or expensive A/B experimentation?

Testing in prod

Let’s imagine that our English program is instructions for our coding agent on how to write good tests.

Now let’s imagine that we receive a PR for these test quality rules and we need to review it and approve it. If it fixes a typo, it seems unlikely that it would cause problems. The approval seems cheap and simple even without any downstream behavior validation.

But now let’s imagine that the PR modifies the instructions, maybe adding test quality constraints and giving examples of sloppy tests in a particular programming language.

The changes look reasonable to our eyes and for that programming language… but how do we know if there are negative side effects we can’t easily foresee on everything else? How do we rule that out?

One option is to put this change behind a flag and distribute the flag with our internal A/B system. We let the system run for a while until we get statistically significant results. Then we approve if the lift was positive and reject if it was negative.

But there are practical issues with this:

we might not have the ability to “gate” a change to a markdown file behind a flag powered by our A/B experiment system

the number of “treatment exposures” we can expect is significantly smaller than the kind of exposure volume our experimental systems are designed to handle. Our experiment population is “people writing tests with agents”, which is never going to be much past the tens of thousands even in the biggest software companies in the world.

Even if we manage to overcome these issues and run the experiment, it might need to run for a while (weeks if not months) to have enough statistical resolving power to detect minor negative externalities. Ain’t nobody got time for that!
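To get a feel for why the numbers don't work out, here is a sketch of the textbook normal-approximation sample size formula for comparing two proportions. The baseline rate, the size of the regression we want to catch, and the conventional 5% significance / 80% power settings are all assumptions chosen for illustration.

```python
import math
from statistics import NormalDist


def samples_per_group(baseline: float, change: float,
                      alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate exposures needed in EACH group to detect `change`
    (an absolute shift in a success rate) with a two-sided test."""
    treated = baseline + change
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # significance threshold
    z_power = NormalDist().inv_cdf(power)          # desired detection power
    variance = baseline * (1 - baseline) + treated * (1 - treated)
    return math.ceil((z_alpha + z_power) ** 2 * variance / change ** 2)


# Hypothetical: 80% of agent-written tests are "good" today and we want
# to notice if the new instructions drop that by one percentage point.
print(samples_per_group(baseline=0.80, change=-0.01))
```

With these made-up inputs the answer lands in the tens of thousands of exposures per group, which is about the size of the entire population we said we could hope for, and the requirement grows with the inverse square of the effect we want to detect.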

In practice, this means that we’d end up taking the risk and YOLO the change, effectively testing it in prod.

Now imagine all sorts of English programs becoming load-bearing for the internal production systems we depend on, and thousands of people testing changes to them in prod.

This doesn’t feel like a happy place of agents making people more productive, but a traffic jam of everyone stepping on everyone else’s toes.

Can we do better?

The Hermetic Problem

Every prompt a user runs on ChatGPT is effectively an English program, but those programs are ephemeral, generally write-once and, as such, they don’t need things like version control, review and testing. Humans certainly never felt the need for a CI/CD system to talk to each other.

User-facing agents do have high-blast-radius English programs (say, system prompts, or integrity/alignment instructions). But we’re now starting to develop English programs that are repeatable instructions shared among fewer people: a group, a team, an organization.

The blast radius is big enough to cause risks of disruption but small enough that A/B experimentation feels overkill. This is a tricky place to be.

But there is another big issue: we need the validation to be hermetic, meaning that all of its inputs should be isolated and reproducible.

Let me show you what it means by going back to our test quality instructions.

One obvious way to perform some sanity checks is to come up with a list of PRs, some with good tests, some with sloppy tests and make sure that we re-run the validation after we change the instructions and get the same results.
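A minimal sketch of such a sanity check might look like the following. Everything here is hypothetical: `Case`, `agreement_rate`, and the `judge` callable (a stand-in for "run the agent with these instructions on this PR and return its verdict") are names invented for illustration. Because the judge is non-deterministic, the sketch runs each case several times and reports how often the verdict matches the human label.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class Case:
    pr_id: str     # hypothetical identifier of a frozen PR
    expected: str  # human-assigned label: "good" or "sloppy"


def agreement_rate(cases: Sequence[Case],
                   judge: Callable[[str, str], str],
                   instructions: str,
                   runs_per_case: int = 3) -> float:
    """Fraction of judge verdicts that match the human labels."""
    if not cases:
        raise ValueError("need at least one labeled case")
    matches = 0
    total = 0
    for case in cases:
        for _ in range(runs_per_case):
            matches += judge(instructions, case.pr_id) == case.expected
            total += 1
    return matches / total


def always_good_judge(instructions: str, pr_id: str) -> str:
    """Stub judge so the sketch runs; a real one would call the agent."""
    return "good"


cases = [Case("pr-1", "good"), Case("pr-2", "sloppy")]
print(agreement_rate(cases, always_good_judge, "instructions v2"))
```

Comparing this rate before and after a change to the instructions gives a cheap signal of regressions, although with a handful of cases it will only catch large ones.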

The problem with this approach is that while the content of the PR is frozen in time, the code around the PR is not. The test might be included in the PR but the code under test is not. This means that the agent needs to fetch this code from the repo (likely using an MCP tool or just reading from the file system), but it needs to make sure it sees that code as it was at the time the PR was committed.

For systems like git, this is trivial: just “git checkout” that commit and all of the local files will be in the right state. But in general, it might be difficult to re-evaluate English programs hermetically such that the value of the evaluation stays the same over time.

Specifically, if evaluating our instructions requires fetching context via MCP tools, all of those tools need to be modified to accept a “checkpoint timestamp” that makes them behave just as they would have if they had been called at that timestamp (when the result of our original eval was recorded).
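To make the idea concrete, here is a toy sketch of what “timestamp compliant” could mean for a single data source. The `TimeTravelStore` class and its `as_of` parameter are invented for illustration and are not part of MCP or any real tool: the store keeps every version of a value and answers reads as if they had been issued at the given checkpoint.

```python
import bisect
from typing import Any, Dict, List, Optional


class TimeTravelStore:
    """Toy versioned key-value store that can answer reads 'as of' a past time."""

    def __init__(self) -> None:
        self._times: Dict[str, List[float]] = {}
        self._values: Dict[str, List[Any]] = {}

    def write(self, key: str, timestamp: float, value: Any) -> None:
        times = self._times.setdefault(key, [])
        values = self._values.setdefault(key, [])
        # Keep versions sorted by timestamp even if writes arrive out of order.
        index = bisect.bisect_right(times, timestamp)
        times.insert(index, timestamp)
        values.insert(index, value)

    def read(self, key: str, as_of: float) -> Optional[Any]:
        """Return the latest value written at or before `as_of`, if any."""
        times = self._times.get(key, [])
        index = bisect.bisect_right(times, as_of)
        if index == 0:
            return None  # the key did not exist yet at that checkpoint
        return self._values[key][index - 1]


store = TimeTravelStore()
store.write("style-guide", timestamp=10, value="v1")
store.write("style-guide", timestamp=20, value="v2")
print(store.read("style-guide", as_of=15))  # the eval recorded at t=15 saw "v1"
```

Each tool an eval depends on would need an equivalent of that `as_of` argument, backed by retained history, which is where the cost comes from.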

As MCP tools become the “loose data ties” of organizations (sort of like fuzzy web services), an effort to make sure that they are all “timestamp compliant” feels like a very expensive task that very few will have the organizational power and political capital to execute successfully.

It is far more likely that these kinds of evals are destined to decay in value over time as the context reproducibility will not be hermetic, similar to how snapshot testing in Jest works.

So now what?

I wrote this post mostly to clear my own thoughts about all this because I think that this is going to be one of the software engineering challenges of the near future: natural language will become load-bearing execution infrastructure but without the safety nets we’ve built for structured languages.

There is a fundamental tension: we value LLMs precisely because they’re flexible and can improvise and adjust, but that same flexibility means we lose the determinism that makes traditional software engineering practices work. For decades, we’ve treated reproducibility as the foundation of trustworthy systems. Now we’re building systems where non-reproducibility is a feature, not a bug.

I think we can do better than creating traffic jams of everyone stepping on everyone else’s toes with their AI-powered changes. But I also think it will require accepting that English programs are a fundamentally different kind of artifact than traditional code.

The goal shouldn’t be to make English programs behave like regular deterministic programs. It’s to build a new set of practices, tools, and intuitions that are native to the probabilistic, context-dependent, semantically-rich nature of natural language.

We essentially need to invent a new discipline and I have no idea what it looks like, but I feel it’s going to be messy for a while.